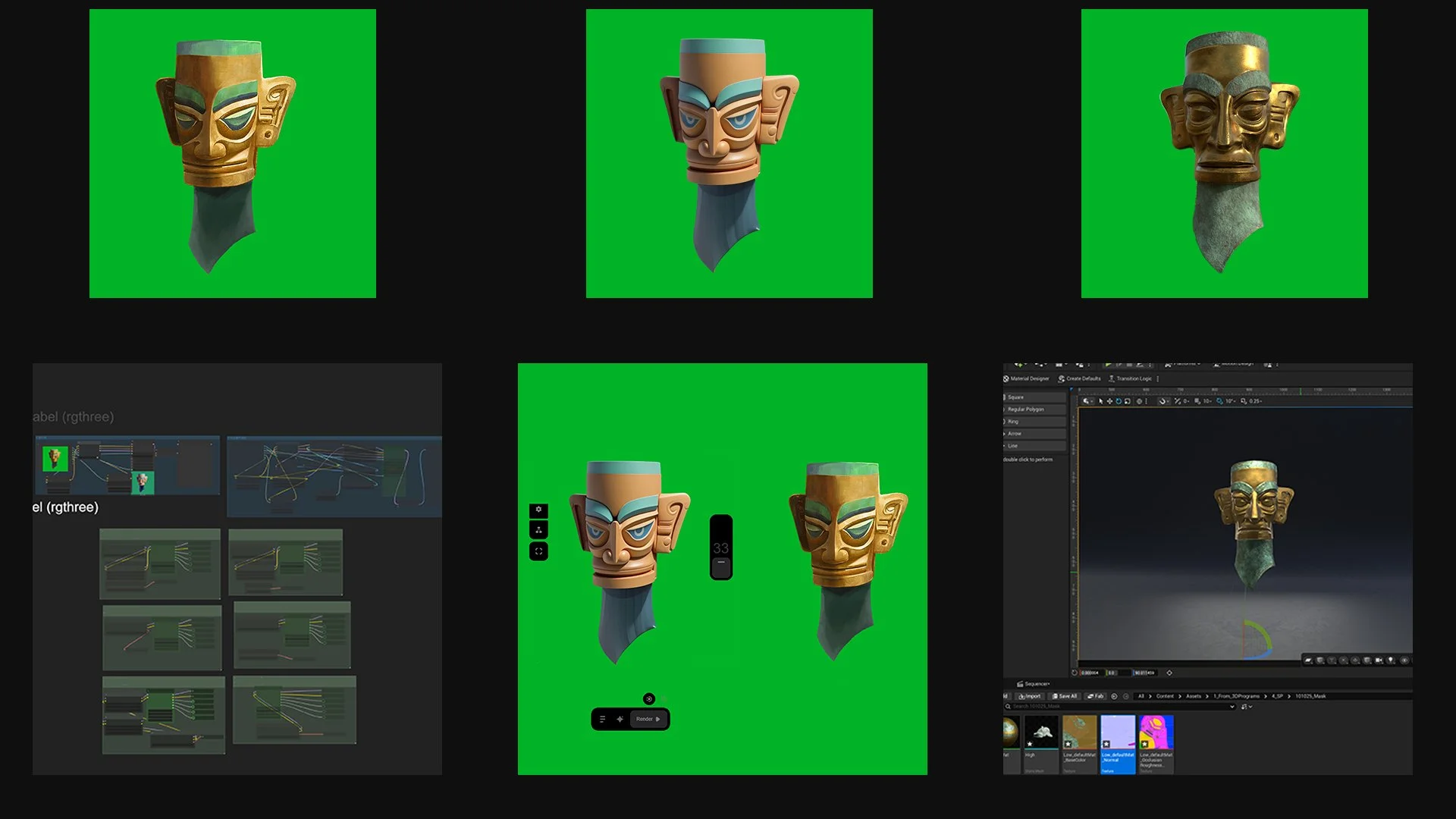

I design my 2D concepts in Photoshop, then use a ComfyUI setup with Nano Banana Live Link to generate 3D-like images directly from my paintings. From there, I use ComfyUI or TripoAI to produce an initial 3D base model that captures the form, proportion, and design intent of the concept.

These AI-generated models serve as a foundation: I bring them into ZBrush to refine the sculpt, fix topology, and enhance anatomical or structural accuracy. Afterward, I texture in Substance Painter, creating realistic materials and full PBR detail. The final assets are production-ready and integrated into Unreal Engine for cinematic and real-time use.

This hybrid workflow merges AI-assisted ideation with traditional craftsmanship, significantly reducing production time while preserving full artistic control from concept to final render.